Evolution of Big Data

Let’s dive today

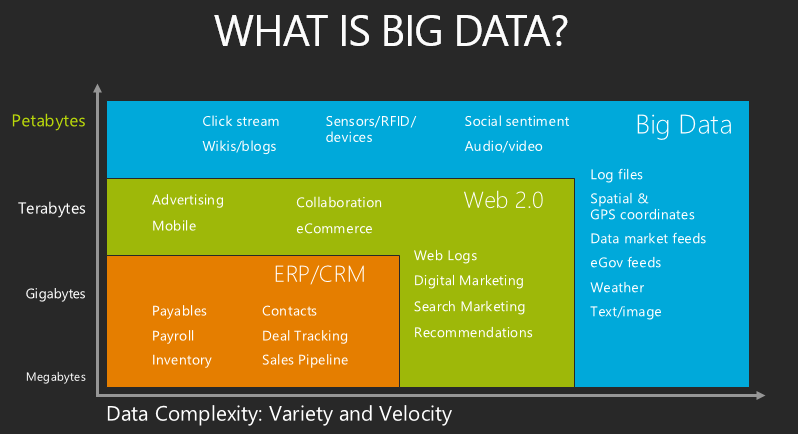

about the real time examples. The enormous data growth now presented a big

challenge for the organizations who wanted to build intelligent systems based

on the data and provide near real time superior user experience to their

customers. Data was received from the transaction system and overnight was

processed to build intelligent reports from it. This kind of approach is a data warehouse

concept where it had its own share of challenges whenever unstructured data is

stored. Now the traditional database has limits to store large volumes

of data that comes in variety. Hence to cover this gap, big data science

evolved with the idea of capturing the data and present to the customers in an

innovative approach.

What is the role of Cloud computing in Big Data?

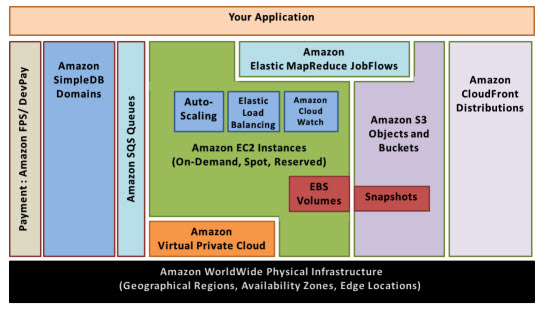

Cloud computing is one of the hottest buzzwords in the IT world. It refers to the use of a scalable public computer network for data storage and for performing computing tasks. One of the easiest examples for explaining cloud computing is Dropbox, which lets you store and access your documents over an internet connection.

In cloud architecture, three models have developed over time:

Private cloud – As the name suggests, it’s intended for one organization and does not share physical resources. The data center is managed internally.

Public cloud – This one is offered by commercial providers like Amazon, which hide the complex infrastructure and provide various resource services.

Hybrid cloud – It is a mix of both private and public cloud that helps in achieving security, elasticity and cheaper load capabilities. It can have a huge impact on an organization if the service is poor.

Cloud and Big Data – characteristics

Below is a list of characteristics of cloud computing:

· Scalability

· Elasticity

· Low cost

Things to watch out for in the future:

IT companies rely heavily on integrated cloud computing solutions to deliver quality projects that meet business needs. There are a few things to consider when deploying big data on cloud solutions:

· Data integrity issues

· Initial cost

· Performance issues

· Data access security requirements
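As one illustration of the data integrity point, a common approach is to compare a checksum computed before the data moves to the cloud with one computed after it comes back. The sketch below is a minimal example under that assumption. The function names `sha256_of` and `is_intact` are hypothetical and are not tied to any particular cloud provider.

```python
import hashlib


def sha256_of(data: bytes) -> str:
    """Return the hex SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()


def is_intact(original: bytes, retrieved: bytes) -> bool:
    """Compare the digest of the data before upload with the digest after download."""
    return sha256_of(original) == sha256_of(retrieved)


if __name__ == "__main__":
    # Hypothetical sample payload standing in for a file sent to cloud storage.
    sent = b"order_id,amount\n1001,25.50\n"
    print(is_intact(sent, sent))               # unchanged copy
    print(is_intact(sent, sent + b"corrupt"))  # altered copy
```

In practice the digest would be stored alongside the uploaded data and checked again whenever the data is read back.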

Use Case Example for Big Data

Let’s consider the retail industry and how big data rules the retail sector.

In the past, stores that reacted to demand grew rapidly. Today, with the rapid growth of technology, top retailers rely on big data to gain a competitive advantage.

Big data helps retailers to:

Ø Predict trends in the market and prepare for future demand

Ø Use real-time analytics to synchronize prices hourly with demand

Ø Perform deeper analytics on all data to find hidden insights
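To make the idea of synchronizing prices hourly with demand more concrete, here is a minimal sketch. The pricing rule (scale the base price by the ratio of observed to expected demand, capped at 20% in either direction) and the names `hourly_price` and `max_swing` are assumptions made for illustration, not the method of any specific retailer.

```python
def hourly_price(base_price: float, observed_demand: float,
                 expected_demand: float, max_swing: float = 0.20) -> float:
    """Scale the base price by the demand ratio, capped at +/- max_swing."""
    if expected_demand <= 0:
        raise ValueError("expected_demand must be positive")
    ratio = observed_demand / expected_demand
    # Clamp the multiplier so the price never deviates more than
    # max_swing from the base price.
    multiplier = max(1 - max_swing, min(1 + max_swing, ratio))
    return round(base_price * multiplier, 2)


if __name__ == "__main__":
    # Hypothetical hourly demand readings for one product.
    for hour, demand in [(9, 80), (12, 150), (18, 100)]:
        print(hour, hourly_price(10.0, demand, expected_demand=100))
```

The cap is a design choice: it keeps one unusual hour of demand from producing a price that surprises customers.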

Big data analytics is now being applied at every stage of the retail process: working out what the popular products will be by predicting trends, forecasting where the demand for those products will be, optimizing pricing for a competitive edge, identifying the customers likely to be interested in them and working out the best way to approach them, taking their money, and finally working out what to sell them next. Big data is useful in other industries too. Take a look at the examples below:

· Starbucks earns more customer loyalty by offering new promotions and deals that customers can access on their mobile phones.

· A hotel chain uses big data to increase bookings.

Why do Big Data and Analytics projects fail?

Business analytics projects tend to make big promises. Among them, the projects propose to give executives a better understanding of their current business environment, to help them anticipate future business conditions, and to facilitate more predictive and prescriptive decision-making. Success or failure depends on working with the data in a methodical way. It also depends on many factors, such as infrastructure and choosing the right tools for your business application.

There are some major reasons for failure. Take a look below:

· Lack of communication between data analysts and top management. Frequent communication can bridge the gap, reducing implementation snags and the risk of unfortunate downstream surprises.

· Ineffective access to clean and reliable data. The data need not be spotless, but it should be reasonably clean, with few data issues. Data maturity is what an organization must look for when implementing big data.

· The organization focuses on technology rather than business opportunities and fails to provide data access to subject matter experts.

· For a project to be successful, an organization must ensure that it has committed stakeholders who ultimately drive business results. The absence of such stakeholders can lead to poor insights and bad decision-making.

· Lacking the right skills is another woe. For new technologies like Hadoop, skilled and efficient programmers are required to make a project successful.

See you soon on my next blog!!!
